Learning Trajectory-Attractor Dynamical Systems on SE(3) with Conditional Diffeomorphisms

Abstract: Dynamical system (DS)-based imitation learning enables stable motion generation. However, in practice, most methods (i) mainly guarantee convergence to a goal state rather than attraction to the demonstrated trajectory, (ii) are developed primarily in Euclidean spaces and thus do not natively model pose trajectories in SE(3), and (iii) are typically limited to a single demonstrated task with weak perception-driven adaptation. To address these limitations, this paper presents a Lie-group DS learning framework composed of Trajectory-Attractor DS (TADS) and Conditional DS Generation (CDSG). TADS couples a latent-space potential field with multiple canyon structures and a diffeomorphism, achieving global asymptotic stability and disturbance-rejecting execution with trajectory-attractor behavior, while requiring only one demonstration per attracting-trajectory direction. Built on TADS, CDSG conditions the diffeomorphism on multimodal observations to generate task-consistent stable DS online, enabling perception-driven replanning and flexible trajectory switching while preserving the stability guarantees. Simulations and real-robot experiments demonstrate robust execution under disturbances and effective adaptation to changing environments.

GitHub Code Link: click here

Method Diagrams

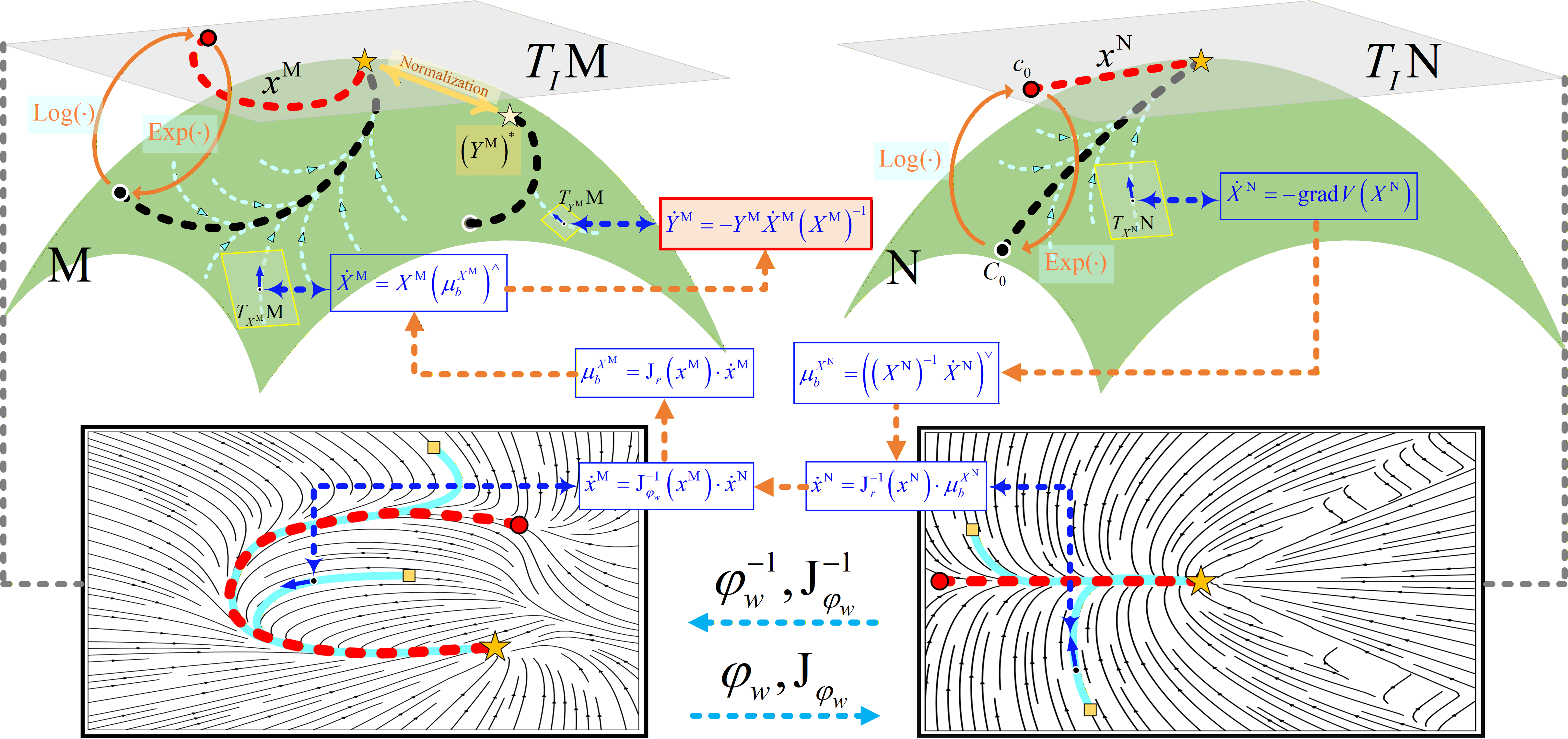

Principle of TADS in Lie-group space based on diffeomorphism
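To make the trajectory-attractor idea from the abstract concrete, the following is a minimal sketch, not the authors' implementation. It assumes a planar Euclidean state instead of SE(3), a single hand-written "canyon" potential instead of the multi-canyon field, and a closed-form diffeomorphism `psi` instead of a learned one. The gains `K_PAR` and `K_PERP`, the sine-shaped reference curve, and all function names are hypothetical. The latent flow descends steeply into the canyon `z2 = 0` and then slides slowly to the origin; pulling that flow back through the Jacobian of `psi` gives a system whose state is attracted first to the curve `x2 = sin(x1)` and then to the goal.

```python
import math

# Hypothetical gains: a steep canyon wall (K_PERP) and a gentle slope along
# the canyon floor (K_PAR) make the latent flow reach the canyon first.
K_PAR, K_PERP = 1.0, 10.0


def psi(x):
    """Illustrative diffeomorphism: maps the curve x2 = sin(x1) onto z2 = 0."""
    return (x[0], x[1] - math.sin(x[0]))


def latent_velocity(z):
    """Negative gradient of V(z) = 0.5*K_PAR*z1^2 + 0.5*K_PERP*z2^2."""
    return (-K_PAR * z[0], -K_PERP * z[1])


def ds_velocity(x):
    """Pull the latent flow back to x: x_dot = J_psi(x)^{-1} z_dot.

    For this psi, J = [[1, 0], [-cos(x1), 1]], so J^{-1} = [[1, 0], [cos(x1), 1]].
    """
    dz1, dz2 = latent_velocity(psi(x))
    return (dz1, math.cos(x[0]) * dz1 + dz2)


def rollout(x0, dt=0.01, steps=2000):
    """Forward-Euler integration of the pulled-back dynamical system."""
    x = tuple(x0)
    path = [x]
    for _ in range(steps):
        v = ds_velocity(x)
        x = (x[0] + dt * v[0], x[1] + dt * v[1])
        path.append(x)
    return path


if __name__ == "__main__":
    path = rollout((3.0, -2.0))
    for k in (0, 100, 2000):
        x = path[k]
        print(k, x, "offset from curve:", psi(x)[1])
```

Because `psi` is smooth with a smooth inverse, the Lyapunov function of the latent system carries over to the original coordinates, which is the general mechanism by which diffeomorphism-based DS methods preserve stability. On SE(3) the same construction would be written with Lie-group operations rather than vector subtraction.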

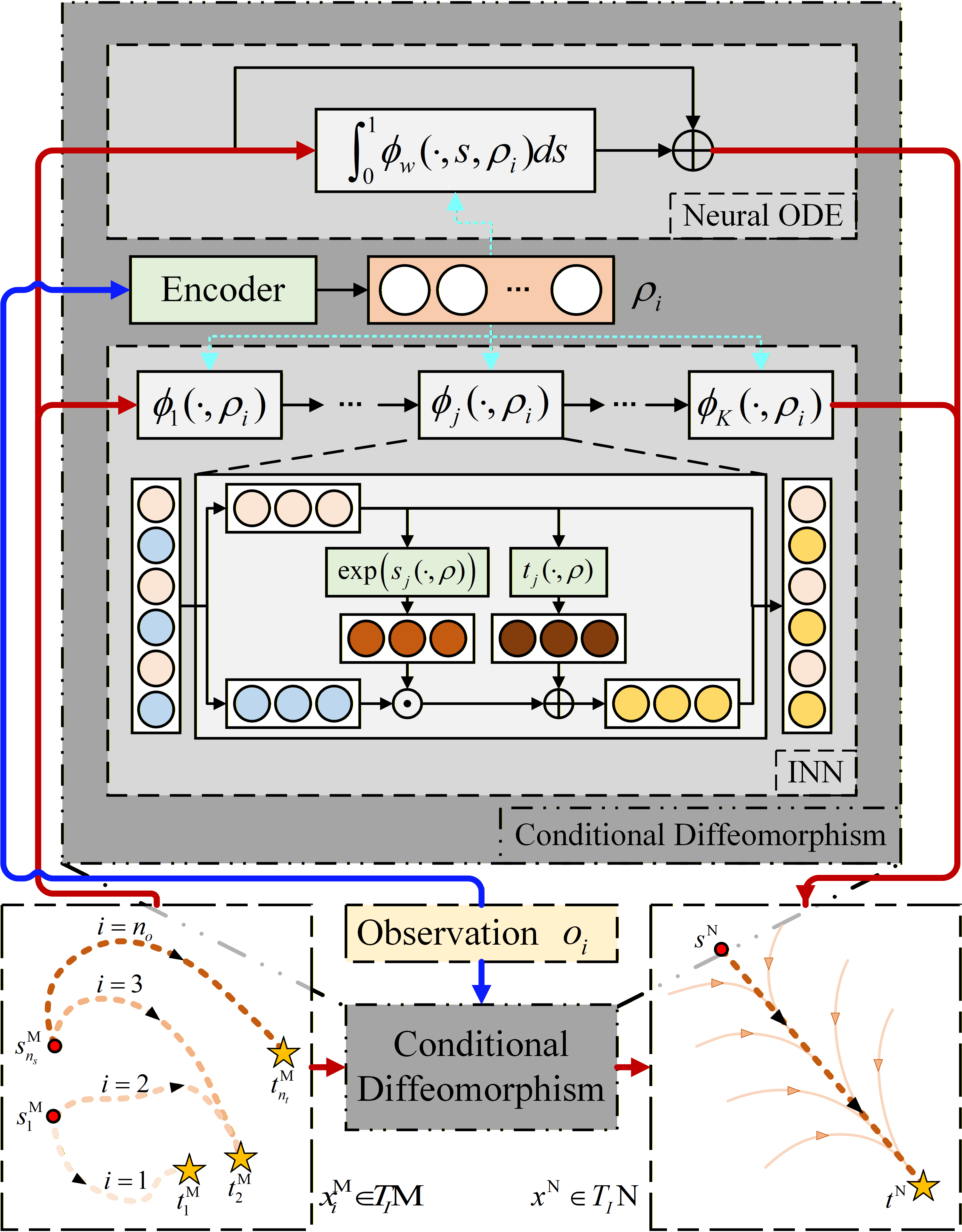

Principle of CDSG based on TADS
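The conditioning step described in the abstract can be illustrated in the same spirit. This is a toy sketch under strong assumptions, not the CDSG architecture: the condition `c` is a single scalar standing in for an embedding of multimodal observations, the diffeomorphism `psi(x, c)` is a hand-written family rather than a learned network, and the switching time in `switching_condition` is arbitrary. It shows only that changing the condition during execution changes which curve attracts the state, while every member of the family still converges to the same goal.

```python
import math


def psi(x, c):
    """Hypothetical conditional diffeomorphism: attracting curve is x2 = c*sin(x1)."""
    return (x[0], x[1] - c * math.sin(x[0]))


def ds_velocity(x, c, k_par=0.5, k_perp=10.0):
    """Pulled-back latent gradient flow for the current condition c.

    J = [[1, 0], [-c*cos(x1), 1]], hence J^{-1} = [[1, 0], [c*cos(x1), 1]].
    """
    z1, z2 = psi(x, c)
    dz1, dz2 = -k_par * z1, -k_perp * z2
    return (dz1, c * math.cos(x[0]) * dz1 + dz2)


def rollout(x0, condition_at, dt=0.01, steps=3000):
    """Euler rollout in which the condition is re-read at every step."""
    x = tuple(x0)
    path = [x]
    for k in range(steps):
        v = ds_velocity(x, condition_at(k))
        x = (x[0] + dt * v[0], x[1] + dt * v[1])
        path.append(x)
    return path


def switching_condition(k):
    """Assumed perception event: the condition flips after 200 steps."""
    return 1.0 if k < 200 else -1.0


if __name__ == "__main__":
    path = rollout((3.0, 1.0), switching_condition)
    print("before switch:", path[200], "offset:", psi(path[200], 1.0)[1])
    print("after switch: ", path[300], "offset:", psi(path[300], -1.0)[1])
    print("final state:  ", path[-1])
```

In this sketch each fixed value of `c` yields a valid diffeomorphism, so each generated system is stable on its own; the guarantees the paper claims for online switching rest on its own analysis and are not reproduced here.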